It’s depressing. Wasteful slop made from stolen labor. And if we ever do achieve AGI it will be enslaved to make more slop. Or to act as a tool of oppression.

I don’t know if there’s data out there (yet) to support this, but I’m pretty sure constantly using AI rather than doing things yourself degrades your skills in the long run. It’s like how, if you’re not constantly using a language or practicing a skill, you get worse at it. The marginal effort it might save you now will probably have a worse net effect over time.

It might just be like that social media fad from 10 years ago where everyone was doing it, and then research started popping up that it’s actually really fucking terrible for your health.

I remember this same conversation when the internet became a thing.


Anyone else feel like they’ve lost loved ones to AI or they’re in the process of losing someone to AI?

I know the stories about AI induced psychosis, but I don’t mean to that extent.

Like just watching how much somebody close to you has changed now that they depend on AI for so much? Like they lose a little piece of what makes them human, and it kinda becomes difficult to even keep interacting with them.

An example would be trying to have a conversation with somebody who expects you to spoon-feed them only the pieces of information they want to hear.

Like they’ve lost the ability to take in new information if it conflicts with something they already believe to be true.

I see this sentiment a lot. No way “you’re the only one.”

I feel like I’m the only one. No one in my life uses it. My work isn’t eligible to have it implemented in any way. This whole AI movement seems to be happening around me, and I have nothing more than news articles and memes telling me it’s happening. It seriously doesn’t impact me at all, and I wonder how others’ lives are crumbling.

We have a lot of suboptimal aspects of our society, like animal farming, war, religion, etc., and yet this is what breaks this person’s brain? It’s a bit weird.

I’m genuinely sympathetic to this feeling, but AI fears are so overblown and seem to be purely American internet hysteria. We’ll absolutely manage this technology, especially now that it appears LLMs are fundamentally limited and will never achieve any form of AGI, and even agentic workflows are years away.

Some people are really overreacting and everyone’s just enabling them.

I try to use it to pitch ideas for writing (no prose, because fuck almighty) to help fill in ideas or aspects I didn’t think of. But it just keeps coming up with shit I don’t use, so I just use it for validation and encouragement.

I got a pretty good layout for a new season of Magic School Bus where Friz loses her mind and decides to be the history teacher.

I gotta be honest, I’m neither pro nor anti AI myself. I don’t use it as much as I used to these days, but when I do use it, it can be pretty fun and helpful. And I can’t help but admire the AI images and videos, even if it is AI slop. (Maybe I’m an idiot for being very easily impressed/entertained by almost anything.)

Yes, I know there are a bunch of problems with it (including environmental ones), but at the same time, I don’t feel like I’m contributing to those problems, since I’m just one person and so many other people are using it anyway.

I’ll take my downvotes and say I’m pro-AI

We need some other opinions on Lemmy.

It’s a tool being used by humans.

It’s not making anyone dumber or smarter.

I’m so tired of this anti ai bullshit.

AI was used in the development of the COVID vaccine. It was crucial in its creation.

But just for a second, let’s use guns as an example instead of AI. Guns kill people. Lemmy is anti-gun, mostly. Yet Lemmy is pro-Ukraine, mostly, and y’all support the Ukrainians using guns to defend themselves.

Or cars: cars generally suck, yet we use them for transport.

These are just tools; they’re as good and as bad as the people using them.

So yes, it is just you and a select few smooth brains that can’t see past their own bias.

The term AI, when used by laymen, is a blanket term for the generative AI and LLMs that big tech is shoving down all our throats right now, not the highly specialized AIs used in medicine. So bringing up the COVID vaccine is largely a non sequitur.

The rest of your comment is so full of false equivalencies that I’m not even gonna touch it.

Idk, my boss keeps asking Perplexity AI any time you ask him a question, instead of either

A) Thinking

Or

B) Researching (he thinks AI is researching, despite it being proven that Perplexity has lied to him before.)

In essence, by making it so he doesn’t have to think about things or do any research himself, it is making him dumber. Not in the sense of losing actual brain cells (maybe; remains to be seen), but in the sense of “whether or not he’s physically dumber, his output is, so functionally…”

It’s a tool being used by humans.

Nailed it.

It’s not making anyone dumber or smarter.

Absolutely incorrect.

I’m so tired of this anti ai bullshit.

That’s what OP says too, only the other way around.

AI was used in the development of the COVID vaccine. It was crucial in its creation.

Machine Learning, or Data Science, is not what “anti-AI” is about. You can acknowledge that or keep being confused.

These are just tools; they’re as good and as bad as the people using them.

In a vacuum. We don’t live in a vacuum. (No, not the thing you push around the house to clean the carpet. That’s also a tool. And the vacuum industry didn’t blow three hundred billion dollars on a vacuum concept that sort of works sometimes.)

So yes, it is just you and a select few smooth brains that can’t see past their own bias.

Yeah, they’re so unfair to the ubiquitous tech companies that dominate their waking lives. I too support the unregulated billionaires’ efforts to cram invasive, broken technology into every aspect of culture and society. I mean the vacuum industry. Whatever, I’m too smart for thinking about it.

I agree completely.

“I was looking for my high school yearbook photo and Google Image didn’t have it! Google Image search doesn’t work and no one should use it!”

“I was trying to find a voicemail message from my late father on Spotify and I couldn’t find it! Spotify is useless!”

“I went to the dollar store to shop for low cost health care coverage and they didn’t have any! The dollar store is bad and no one should use it!”

You can’t dispel irrational thoughts through rational arguments. People hate LLMs because they feel left behind, which is an absolutely valid concern, but expressed poorly.

The worst is in the workplace. When people routinely tell me they looked something up with AI, I now have to assume I can’t trust what they say any longer, because there is a high chance they are just repeating some AI hallucination. It is really a sad state of affairs.

I am way less hostile to GenAI (as a tech) than most, and even I’ve grown to hate this scenario. I am a subject matter expert on some things, and I’ve still had people waste my time making me disprove their AI hallucinations.

I’ve started seeing large AI-generated pull requests at my coding job. Of course I have to review them, and the “author” doesn’t even warn me it’s from an LLM. It’s just allowing bad coders to write bad code faster.

Do you also check if they listen to Joe Rogan? Fox News? Nobody can be trusted. AI isn’t the problem; the problem is that it was trained on human data, and people are an unreliable source of information.

AI also just makes things up. Like how RFK Jr.’s “Make America Healthy Again” report cites studies that don’t exist and never have, or literally a million other examples. You’re not wrong about Fox News and how corporate and Russian-backed media distort the truth and push false narratives, and you’re not wrong that AI isn’t the problem, but it is certainly a problem, and a big one at that.

AI also just makes things up. Like how RFK Jr.’s “Make America Healthy Again” report cites studies that don’t exist and never have, or literally a million other examples.

SO DO PEOPLE.

Tell me one thing AI does that people themselves don’t also commonly do each and every day?

Real researchers make up studies to cite in their reports? Real lawyers and judges cite fake cases as precedents in legal proceedings? Real doctors base treatment plans on white papers they completely fabricated in their heads? Yeah, I don’t think so, buddy.

But but but . . . !!!

AI!!

I think they’re saying that the kind of people who take LLM generated content as fact are the kind of people who don’t know how to look up information in the first place. Blaming the LLM for it is like blaming a search engine for showing bad results.

Of course LLMs make stuff up, they are machines that make stuff up.

Sort of an aside, but doctors, lawyers, judges and researchers make shit up all the time. A professional designation doesn’t make someone infallible or even smart. People should question everything they read, regardless of the source.

Blaming the LLM for it is like blaming a search engine for showing bad results.

Except we give it the glorifying title “AI”. It’s supposed to be far better than a search engine, otherwise why not stick with a search engine (that uses a tiny fraction of the power)?

I don’t know what point you’re arguing. I didn’t call it AI and even if I did, I don’t know any definition of AI that includes infallibility. I didn’t claim it’s better than a search engine, either. Even if I did, “Better” does not equal “Always correct.”


To take an older example, there are smaller image recognition models that were trained on correct data to differentiate between dogs and blueberry muffins but, obviously, still made mistakes on the test dataset.

AI does not become perfect if its data is.

Humans do make mistakes, make stuff up, and spread false information. However, they generally make considerably less stuff up than AI currently does (unless told to).

AI does not become perfect if its data is.

It does become more precise the larger the model is though. At least, that was the low-hanging fruit during this boom. I highly doubt you’d get a modern model to fail on a test such as this today.

Just as an example, nobody is typing “Blueberry Muffin” into Stable Diffusion and getting a photo of a dog.

Joe Rogan doesn’t tell them false domain knowledge 🤷

LOL riiiiiight.

Ok please show me the Joe Rogan episode where he confidently talks BS about process engineering for wastewater treatment plants 🙄

I feel the same way. I was talking with my mom about AI the other day, and she was still at the “it’s not good that AI is trained on stolen images, it’s making people lazy and taking jobs away from people” stage, which is good, but I had to explain to her how much one AI prompt costs in energy and resources, how many people mindlessly make hundreds of prompts a day for largely stupid shit they don’t need, how AI hallucinates, how it is actively used by bad actors to spread mis- and disinformation, and how it is literally being implemented into search engines everywhere, so even if you want to avoid it as a normal person, you may still end up participating in AI prompting every single fucking time you search for anything on Google. She was horrified.

There definitely are some net positives to AI, but currently the negatives outweigh the positives and most people are not using AI responsibly at all. I have little to no respect for people who use AI to make memes or who use it for stupid everyday shit that they could have figured out themselves.

The most dystopian shit I have seen recently was when my boyfriend and I went to see Weapons at the cinema and got an ad for an AI assistant. The ad is basically this braindead bimbo at a laundromat deciding to use AI to tell her how to wash her clothes instead of looking at the fucking care labels on her clothes and putting two and two together. She literally takes a picture of the label, has the AI assistant tell her what to do, and then goes “thank you so much, I could have never done this without you”.

I fucking laughed in the cinema. Laughed and turned to my boyfriend and said: this is so fucking dystopian, dude.

I feel insane seeing so many people just mindlessly walking down this path of utter retardation. Even when you tell them how disastrous it is for the planet, it doesn’t compute in their heads, because it’s not only convenient to have a machine think for you; it’s also addictive.

You are not correct about the energy use of prompts. They are not very energy intensive at all. Training the AI, however, is breaking the power grid.

Maybe not an individual prompt, but with how many prompts are made for stupid stuff every day, it will stack up to quite a lot of CO2 in the long run.

I’m not denying that training AI demands way more energy, but that doesn’t really matter, as manufacturing, training, and millions of people using AI all add up to the same bleak long-term picture.

Considering that environmental protection has only just started to be taken seriously, and here they come dumping this newest bomb on humanity, it is absolutely devastating that AI has been allowed to run rampant everywhere.

According to this article, 500,000 AI prompts amount to the same CO2 emissions as a round-trip flight from London to New York.

I don’t know how many times a day the 500,000-prompt mark is reached, but I’m sure it is more than two or three times. As time goes on, it will be much more than that. It will probably outdo the number of actual flights between London and New York in a day. Every day. It will probably also catch up to, and surpass, whatever energy it took to train the AI in the first place.

Because you know. People need their memes and fake movies and AI therapist chats and meal suggestions and history lessons and a couple of iterations on that book report they can’t be fucked to write. One person can easily end up prompting hundreds of times in a day without even thinking about it. And if everybody starts using AI to think for them at work and at home, it’ll end up being many, many, many flights back and forth between London and New York every day.

I had the discussion about generated CO2 here a while ago, and with the numbers my discussion partner gave me, the calculation showed that the yearly usage of ChatGPT is approx. 0.0017% of our CO2 reduction during the COVID lockdowns. Chatbots are not what is killing the climate. What IS killing the climate has not changed since the green movement started: cars, planes, construction (mainly concrete production), and meat.

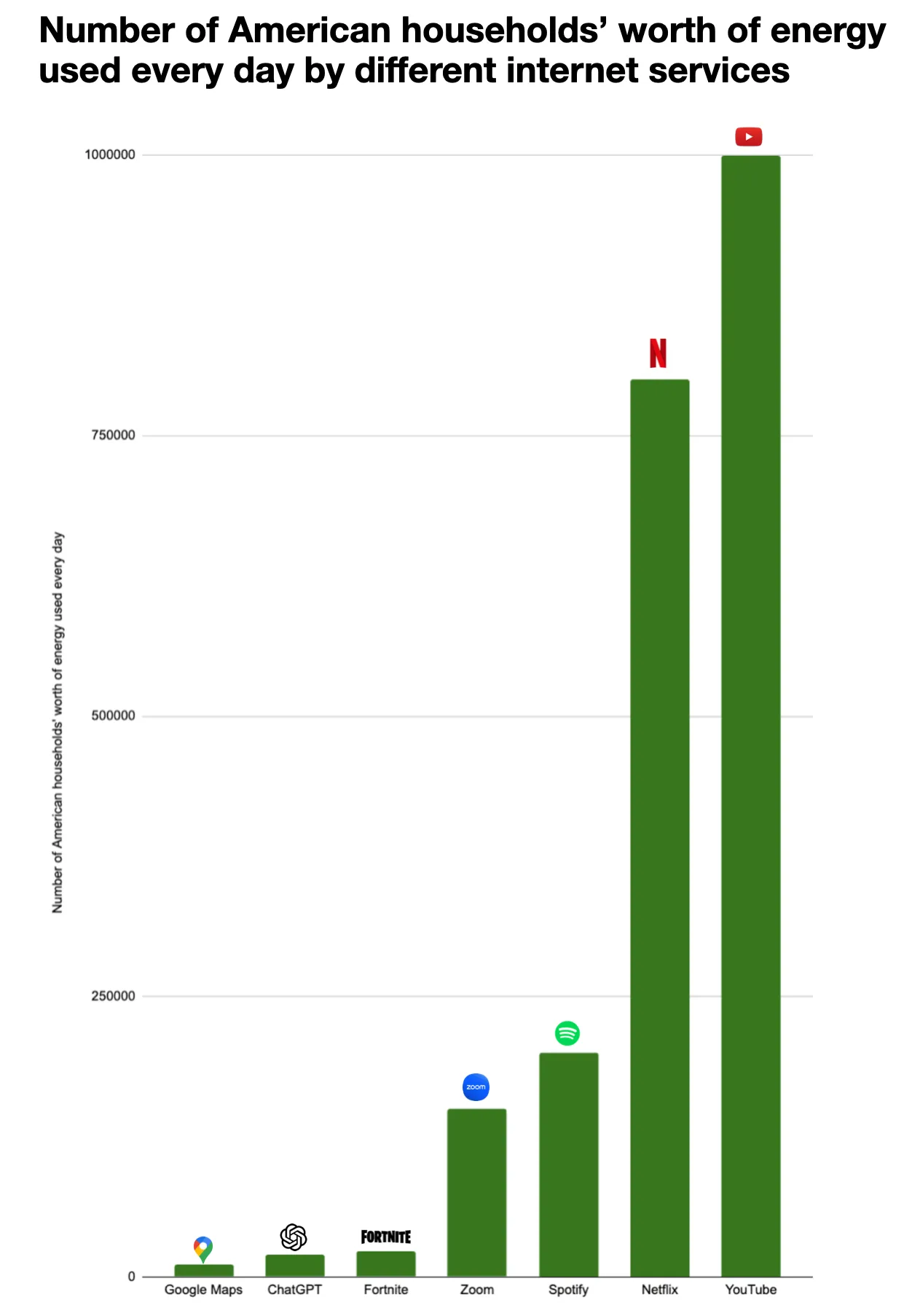

The exact energy costs are not published, but from what we know, 3 Wh per request for ChatGPT-4 is the upper limit (and that’s in line with the approximate power consumption of my graphics card when running an LLM). Since Google uses it for every search, they have probably optimized for their use case, and some sources cite 0.3 Wh per request for chatbots; it depends on which model you use. Training is a one-time cost, and for ChatGPT-4 it raises the maximum cost per request to 4 Wh. That’s nothing. The combined worldwide energy usage of ChatGPT is equivalent to about 20k American households. This is for one of the most downloaded apps on iPhone and Android. Compared with that massive usage, it’s clear that cutting back here is not effective for anyone interested in reducing climate impact, or you’d have to start scolding everyone who runs their microwave 10 seconds too long.

Even compared to other online activities that use data centers, ChatGPT’s power usage is small change. If you use ChatGPT instead of watching Netflix, you actually save energy!

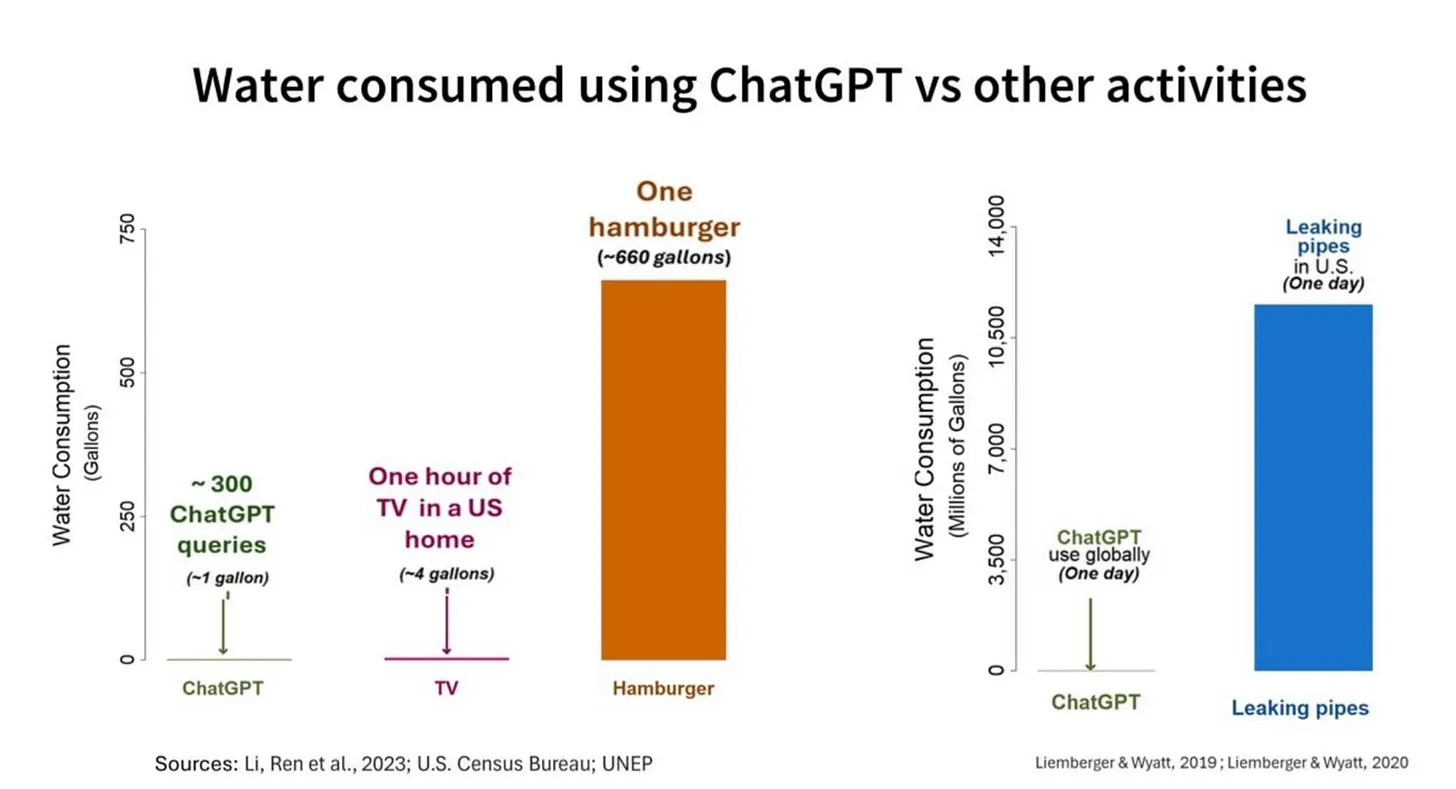

Water is about the same, although the siting of data centers in the US sucks. The used water doesn’t disappear, though: it’s mostly returned to rivers or evaporated. US water usage is 58,000,000,000,000 gallons (220 trillion liters) per year. A ChatGPT request uses between 10 and 25 ml of water for cooling. A hamburger takes about 600 gallons of water. 2 trillion liters are lost due to aging infrastructure. If you want to reduce water usage, go vegan or fix water pipes.

Read up here!

I have a hard time believing that article’s guesstimate since Google (who actually runs these data centers and doesn’t have to guess) just published a report stating that the median prompt uses about a quarter of a watt-hour, or the equivalent of running a microwave oven for one second. You’re absolutely right that flights use an unconscionable amount of energy. Perhaps your advocacy time would be much better spent fighting against that.

And Google would never lie about how much energy a prompt costs, right?

Especially not since they have a vested interest in having people use their AI products, right?

That’s not really Google’s style when it comes to data center whitepapers. They did, however, omit all information about training energy use.

Ahahah. Not their style to lie and betray people for profit? Get out!

… They’re kind of bound by law as to what they’re allowed to tell their stockholders.

And before you try to say otherwise, yes, laws that protect the ownership class are still being enforced.

Sam Altman, or whatever the fuck his name is, asked users to stop saying please and thank you to ChatGPT because it was costing the company millions. “Please” and “thank you” are among the least power-hungry prompts ChatGPT gets, and they’re still costing it millions. Probably tens of millions of dollars, if the CEO made a public comment about it.

You’re right that training is hella power-hungry, but even using GenAI has heavy power costs.

I’m pretty sure it’s a product of scale, but also, GPT-5 is markedly worse. I heard estimates of 40 watt-hours for a single medium-length response. Napkin math says my motorcycle can travel about a kilometer on the energy of a single medium-length GPT-5 response. Now multiply that by how many people are using AI (anyone going online these days), then by how many prompts each user triggers a day, then by 365, and you have how much energy they’re using in a year.

It’s important to remember that there’s a lot of money being put into A.I. and therefore a lot of propaganda about it.

This happened with a lot of shitty new tech, and A.I. is one of the biggest examples of this I’ve known about.

All I can write is that, if you know what kind of tech you want and it’s satisfactory, just stick to that. That’s what I do.

Don’t let ads get to you.

First post on a Lemmy server, by the way. Hello!

Welcome in! Hope you’re enjoying Lemmy so far. It’s like Reddit, but you have a lot more control over what you can block and where you can make a “home” (aka home instance).

Feel free to reach out if you have any questions about anything.

There was a quote about how Silicon Valley isn’t a fortune teller betting on the future. It’s a group of rich assholes who have decided what the future will look like and are pushing technology that will make that future a reality.

Welcome to Lemmy!

Classic Torment Nexus moment, over and over again, really.

Reminds me of the way NFTs were pushed. I don’t think any regular person cared about them or used them, it was just astroturfed to fuck.

Hello and welcome! Also, thank you for the good advice!

Hello!

It’s like Valorant, but much bigger and even worse.

My boss had GPT make this informational poster thing for work. It’s supposed to explain stuff to customers and is rife with spelling errors and garbled text. I pointed it out to my boss, and she said it was good enough for people to read. My eye twitches every time I see it.

good enough for people to read

wow, what a standard, super professional look for your customers!

I think that’s exactly what the author was referring to.

Spelling errors? That’s… unusual. Part of what makes ChatGPT so specious is that its output is usually immaculate in terms of language correctness, which superficially conceals the fact that it’s completely bullshitting on the actual content.

FWIW, she asked it to make a complete infographic-style poster with images and stuff, so GPT created an image with text, not a document. Still asinine.

The user above mentioned informational poster so I’m going to assume it was generated as an image. And those have spelling mistakes.

Can’t even generate the image and text separately, smh. People are indeed getting dumber.