courtesy @self

- preprint: https://arxiv.org/pdf/2309.02926

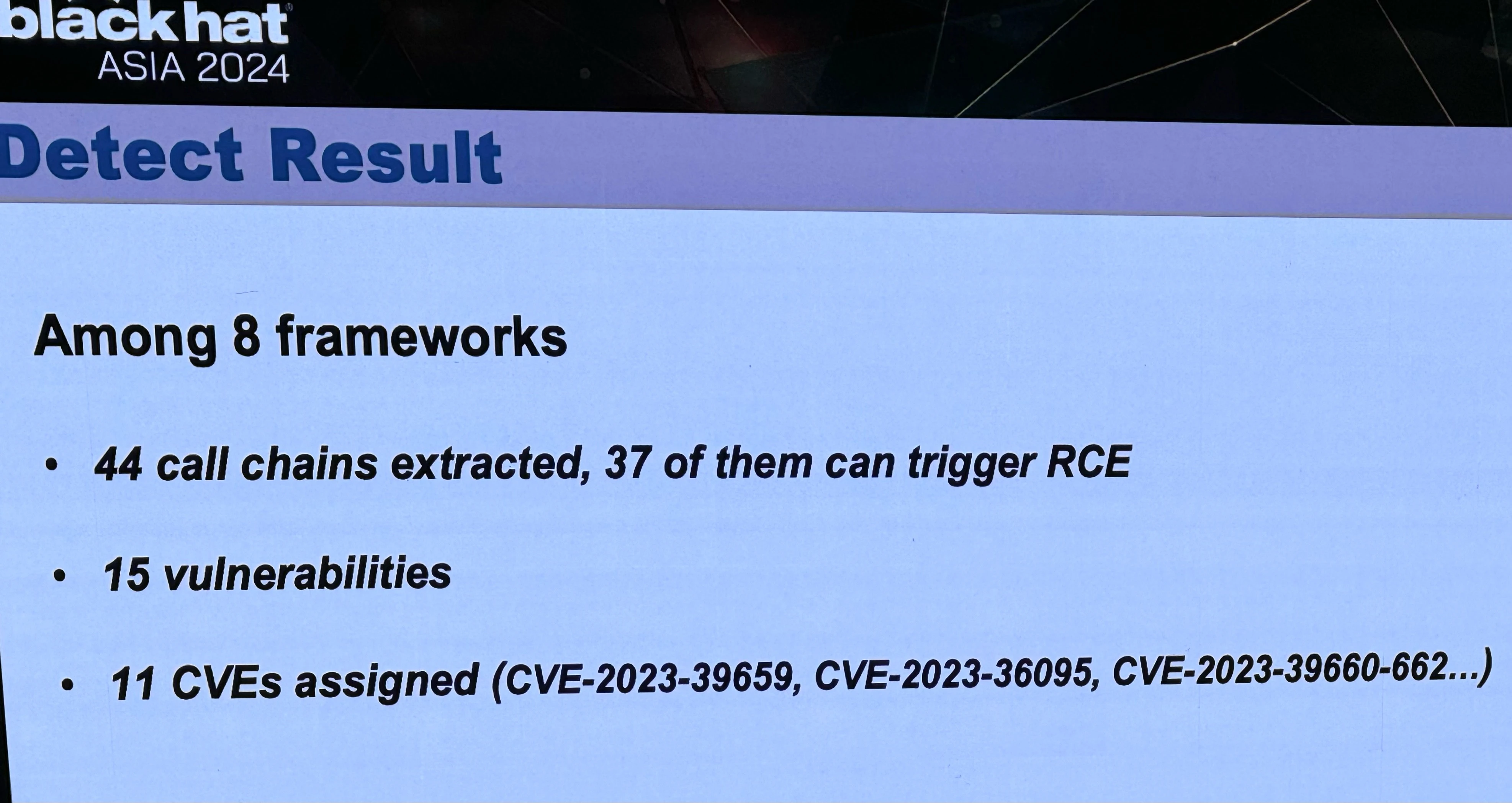

- blackhat abstract: https://www.blackhat.com/asia-24/briefings/schedule/index.html#llmshell-discovering-and-exploiting-rce-vulnerabilities-in-real-world-llm-integrated-frameworks-and-apps-37215

- Tong Liu’s related research: https://scholar.google.com/citations?hl=en&user=egWPi_IAAAAJ

can’t wait for the crypto spammers to hit every web page with a ChatGPT prompt. AI vs Crypto: whoever loses, we win

It’s quite common for LLMs to make use of agents for retrieving factual information, because text generation on its own is just garbage for that.

For example, basic maths is not something you can do with just text generation.

So, you hook up some API or similar and then tell the LLM before the user prompt: “For calculating maths, send it to the API at https://example.com/calc and use the response as a result.”

The LLM can figure out the semantics, so if the user asks to “compute” something or just writes “3 + 5”, it will recognize that this is maths and it will usually make the right decision to use the API provided.

Obviously, the specifics will be a bit more complex. You might need to give it an OpenAPI definition and tell it to generate an OpenAPI-compatible request, or maybe even offer it a simple script that it can just pass the “3 + 5” to and that does the request.

Basically, the more work you take away from the LLM, the more reliably everything will work.
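The “simple script” idea above could look roughly like this. It’s only a sketch: the endpoint is the placeholder URL from the example prompt, and the `expr` query parameter and the `build_calc_request` name are made up for illustration. It builds the request but doesn’t send anything.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Hypothetical endpoint from the example prompt; not a real calculator service.
CALC_URL = "https://example.com/calc"


def build_calc_request(expression: str) -> Request:
    """Build (but do not send) a GET request for the calculator API."""
    # urlencode escapes the expression so "3 + 5" survives as a query string.
    # The "expr" parameter name is an assumption for this sketch.
    query = urlencode({"expr": expression})
    return Request(f"{CALC_URL}?{query}", method="GET")


req = build_calc_request("3 + 5")
print(req.full_url)
```

The LLM then only has to hand over the raw expression; the escaping and request shape are handled by ordinary code, which is the “take work away from the LLM” part.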

It’s also quite common to tell your LLM to just send the prompt to Google/Bing/whatever Search and then use the first 5 results as the basis for its response. This is especially necessary for recent information.
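As a rough sketch of that search step, assuming the search call has already returned a list of results with `title` and `snippet` keys (those key names and the `build_grounded_prompt` helper are hypothetical, not any particular search API):

```python
def build_grounded_prompt(question: str, results: list[dict], k: int = 5) -> str:
    """Put the top-k search results in front of the user's question."""
    lines = ["Answer using only the search results below.", ""]
    # Keep only the first k hits, numbered so the model can refer to them.
    for i, hit in enumerate(results[:k], start=1):
        lines.append(f"[{i}] {hit['title']}: {hit['snippet']}")
    lines += ["", f"Question: {question}"]
    return "\n".join(lines)


# Placeholder results standing in for whatever the search backend returns.
fake_results = [
    {"title": f"Page {n}", "snippet": f"Snippet {n}"} for n in range(1, 8)
]
print(build_grounded_prompt("What happened this week?", fake_results))
```

Worth noting that the snippets are untrusted text pasted straight into the prompt, which is exactly the opening for the web-page prompt spam joked about above.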

you appear to be posting this in good faith so I won’t start at my usual level, but … what? do you realize that you didn’t make a substantive contribution to the particular thing observed here, which is that somewhere in the mishmash dogshit that is popular LLM hosting there are reliable ways to RCE it with inputs? I think maybe (maybe!) you meant to, but you didn’t really touch on it at all

other than that:

people here are aware, yes, and it stays continually entertaining

I think they were responding to the implication in self’s original comment that LLMs were claiming to evaluate code in-model and that calling out to an external python evaluator is ‘cheating.’ But actually as far as I know it is pretty common for them to evaluate code using an external interpreter. So I think the response was warranted here.

That said, that fact honestly makes this vulnerability even funnier because it means they are basically just letting the user dump whatever code they want into eval() as long as it’s laundered by the LLM first, which is like a high-school level mistake.
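To make the eval() point concrete, here is a minimal sketch of the alternative for the calculator case: parse the expression and only allow plain arithmetic. This is not the specific bug from the preprint, just an illustration, and `safe_calc` is a made-up name.

```python
import ast
import operator

# Whitelist of arithmetic operators; anything else is rejected.
_BIN_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def safe_calc(expression: str) -> float:
    """Evaluate plain arithmetic without handing the string to eval()."""

    def _eval(node):
        if isinstance(node, ast.Expression):
            return _eval(node.body)
        if isinstance(node, ast.Constant) and type(node.value) in (int, float):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _BIN_OPS:
            return _BIN_OPS[type(node.op)](_eval(node.left), _eval(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -_eval(node.operand)
        # Calls, attribute access, names, etc. all end up here.
        raise ValueError("unsupported expression")

    return _eval(ast.parse(expression, mode="eval"))


print(safe_calc("3 + 5"))

# The kind of string an LLM might faithfully pass along from a user prompt.
payload = "__import__('os').getcwd()"
try:
    safe_calc(payload)
except ValueError as err:
    print("rejected:", err)
```

With a bare `eval(payload)` the same string would run as code; here it is refused because a function call is not on the whitelist.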

Yeah, that was exactly my intention.