courtesy @self

- preprint: https://arxiv.org/pdf/2309.02926

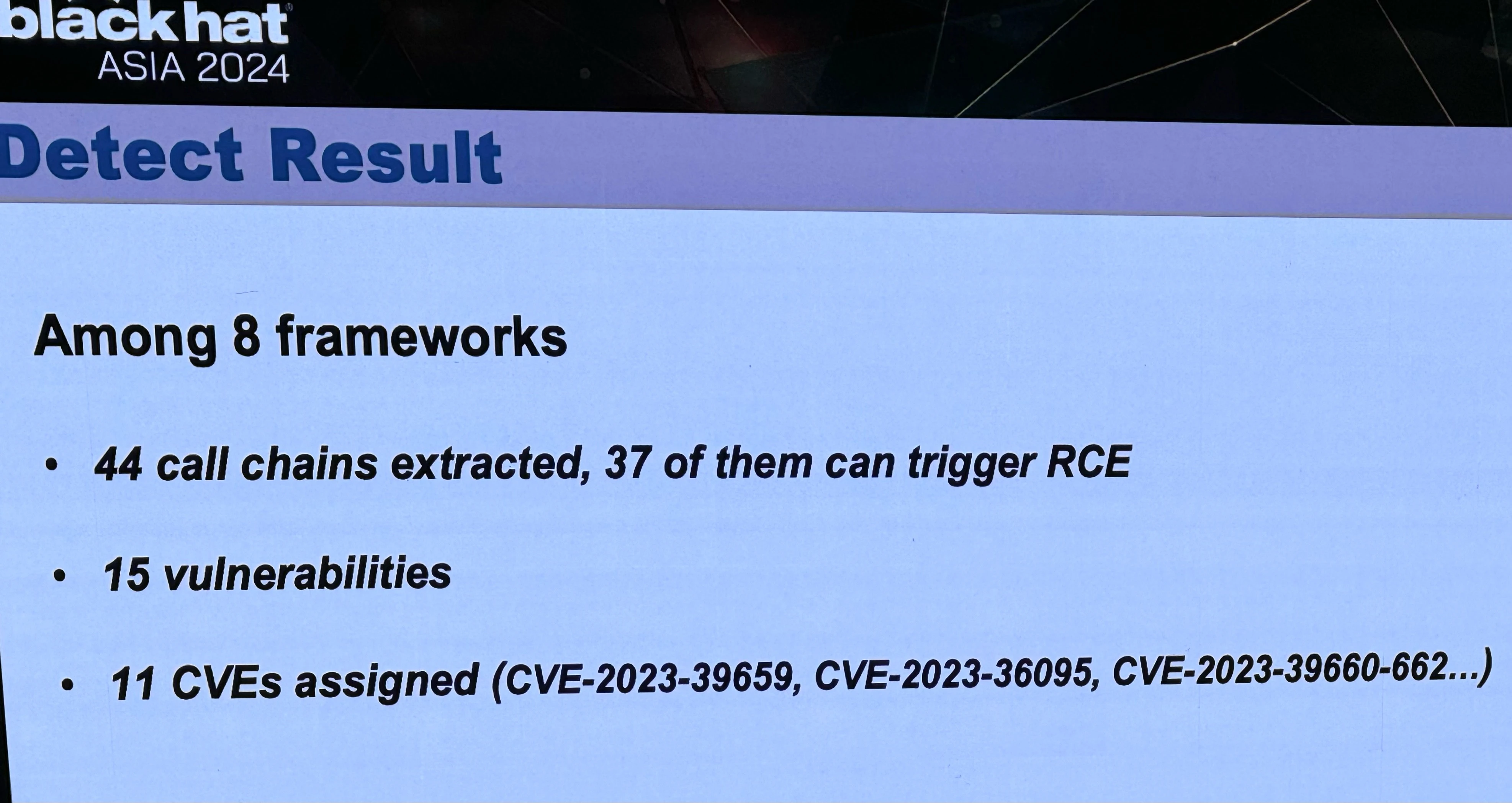

- blackhat abstract: https://www.blackhat.com/asia-24/briefings/schedule/index.html#llmshell-discovering-and-exploiting-rce-vulnerabilities-in-real-world-llm-integrated-frameworks-and-apps-37215

- Tong Liu’s related research: https://scholar.google.com/citations?hl=en&user=egWPi_IAAAAJ

can’t wait for the crypto spammers to hit every web page with a ChatGPT prompt. AI vs Crypto: whoever loses, we win

I think they were responding to the implication in self’s original comment that LLMs were claiming to evaluate code in-model and that calling out to an external python evaluator is ‘cheating.’ But actually as far as I know it is pretty common for them to evaluate code using an external interpreter. So I think the response was warranted here.

That said, that fact honestly makes this vulnerability even funnier because it means they are basically just letting the user dump whatever code they want into eval() as long as it’s laundered by the LLM first, which is like a high-school level mistake.

Yeah, that was exactly my intention.