lol, definitely missed some important context.

I guess it thought OOP meant “clean” as in how do you dress the bird before you cook it. (As in: “clean a fish” means to filet a fish and prep it for cooking.)

I rarely remove the label from the fish I catch.

Well obviously not for fish. Sounds like someone’s never bought fresh pigeons from the grocery store, smh.

It’s actually illegal to cut those off.

Yea, it voids the warranty. So when you get poisoned after eating it without label, you won’t be able to get a refund.

BREAKING NEWS: Mother Nature recalls over 1000 lbs of tuna

Be sure you read the EULA before you clean a pigeon.

it might contain traces of forced arbitration

I thought it was only illegal for the stores to cut those off. Like it was some kind of consumer protection thing. Which was back in the 70s, we don’t get that kind of thing anymore.

No, that’s mattress fishing

Nobody ask it how to dress a baby

Vegetables, garlic, basted with pan drippings.

Side of potatoes.

Swiftly

First you snap a shoulder socket…

Even then those are bad cleaning instructions…

But first it said they are usually clean. So that can’t be the context. If there was a context. But there is no context because AI is fucking stupid and all these c-suite assholes pushing it like their last bowel movement will be eating crow off of their golden parakeet about two years from now when all this nonsense finally goes away and the new shiny thing is flashing around.

There are signs of three distinct interpretations in the result:

- On topic, the concept of cleaning a wild bird you are trying to save

- Preparing a store-bought turkey (removing a label)

- Preparing a wild bird that is caught

It’s actually a pretty good illustration of how AI assembles “information shaped text”, and of how smooth it can look while still being dumb about it. Unfortunately advocates will just say “I can’t get this specific thing wrong when I ask it or another LLM, so there’s no problem”, even as it gets other stuff wrong. The odd part is that you have to be able to second-guess the result, which means you can never be confident in an answer you didn’t already know, and when that’s the case, it’s not that great for factual stuff.

For “doesn’t matter” content, it may do fine (generated alternatives to stock photography, silly meme pictures, random prattle from background NPCs in a game), but for “stuff that matters”, Generative AI is frequently more of a headache than a help.

A: Just rescued a bird B: Oh, can I see it? A: Sorry, already ate it yesterday

It literally doesn’t matter. When the most-used search engine on the planet automatically suggests these specific actions without you even clicking on a specific site? We’re fucked. We had the chance to break up monopolies like Google, Microsoft and Facebook. We didn’t take it…

No WE never had this option.

What the fuck are you talking about? Stop apologizing for AI, you clown.

Do you remove the “label” in step one of cleaning a fish? Please, tell us all where that is.

Wut?

and yet the crypto/AI bros swear that the second coming of AI Christ is here.

Just a few more billion dollars.

We’re so close!

Just a bit more rainforest, no one will notice.

IIRC you need double the compute for 10% improvement in a model and they’ve already computed quite a bit.
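Taking that rule of thumb at face value, here is a rough back-of-the-envelope sketch of what it would imply. The "10% per doubling" figure is the recollection above, not a measured scaling law, and the assumption that gains compound multiplicatively is mine; the function names are made up for illustration.

```python
import math


def doublings_needed(target_gain: float, gain_per_doubling: float = 1.10) -> float:
    """How many compute doublings reach `target_gain`, ASSUMING each doubling
    multiplies quality by `gain_per_doubling` (the 10% rule of thumb)."""
    return math.log(target_gain) / math.log(gain_per_doubling)


def compute_multiplier(target_gain: float, gain_per_doubling: float = 1.10) -> float:
    """Total compute multiplier implied by that many doublings."""
    return 2.0 ** doublings_needed(target_gain, gain_per_doubling)


if __name__ == "__main__":
    # Under these assumptions, a model "twice as good" needs a bit over
    # seven doublings, i.e. well over a hundred times the compute.
    print(round(doublings_needed(2.0), 2))
    print(round(compute_multiplier(2.0)))
```

If the real relationship is anything like this, each further step gets dramatically more expensive, which is the point being made.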

Do the search yourself and you’ll see that you get a better answer.

Question: How to clean a rescued pidgeon

ChatGPT: Cleaning a rescued pigeon should be done carefully to avoid stressing or harming the bird. Here’s a step-by-step guide to help you:

- Assess the Pigeon’s Condition
  - Check for injuries: If the pigeon is injured, weak, or sick, it’s best to seek veterinary help before attempting to clean it.
  - Observe behavior: If the pigeon is acting stressed or aggressive, you may need to wait until it calms down.

- Prepare the Cleaning Area
  - Warm water: Fill a basin or sink with lukewarm water, not hot or cold.
  - Mild soap: Use a gentle soap or baby shampoo without strong fragrances or chemicals.
  - Towels: Have clean towels ready for drying the pigeon afterward.

- Handling the Pigeon Safely
  - Wear gloves: This can protect both you and the bird.
  - Secure hold: Hold the pigeon gently but firmly. Support its body with one hand and keep its wings close to its body to prevent flapping.

- Cleaning the Pigeon
  - Partial cleaning: Focus on specific dirty areas rather than submerging the whole bird if possible.
  - Use a damp cloth: For lightly soiled areas, use a damp cloth or sponge to wipe the feathers gently.
  - Bath for heavier dirt: If a full wash is needed, place the pigeon in the water up to its chest. Avoid getting its head and neck wet unless absolutely necessary.

- Rinsing
  - Rinse thoroughly: If you used soap, make sure to rinse all of it out of the feathers using clean, lukewarm water.
  - Avoid the face: Be careful not to get water in the pigeon’s eyes, nose, or ears.

- Drying the Pigeon
  - Pat dry: Gently pat the pigeon with a towel to remove excess water. Avoid rubbing as it could damage the feathers.
  - Warm place: Keep the pigeon in a warm, draft-free area until it is completely dry. You can use a hairdryer on the lowest setting if the pigeon tolerates it, but keep it at a safe distance to avoid overheating.

- Post-Cleaning Care
  - Observe behavior: Watch the pigeon for signs of stress or discomfort after the bath.
  - Provide food and water: Ensure the pigeon has access to fresh water and a safe, comfortable place to rest.

- Additional Tips
  - Do not use strong disinfectants or chemicals that can harm the bird’s sensitive skin and feathers.
  - Limit baths to when absolutely necessary, as frequent cleaning can strip the natural oils from the pigeon’s feathers.
  - If the pigeon seems injured or unwell, contact a wildlife rescue center or avian veterinarian for guidance.

But Google doesn’t use chatgpt.

it’s still a crapshoot and therefore useless.

Could you be a little more constructive and point me at the points that are wrong and useless?

Thank you.

i think the point is that the answer is not reliable. it might be completely correct or borderline wrong, or something in between, and there’s no way to tell without verifying everything it says - and then one could look it up oneself in the first place already.

I’m no fan of generative AI, but this argument drives me crazy. There are a lot of things that are easy to verify but hard to come up with, quite famously in fact.

Same as most human iterations then?

At least I fact check everything I read. Like I did with this post and the church of the anti-AI got angry they got fact checked.

i didn’t get angry, that was the other guys. just trying to explain it rationally

If you’re using AI verbatim without looking up answers and verifying results, then that’s on you.

When you Google something, do you take the first result and just assume it’s fact? You shouldn’t for AI either.

If you’re going to do the research anyway, why bother with AI?

Honestly? It’s a great place to start, especially with every search engine being worse than anything pre-2018

I used to have to post my error codes to a forum if googling them didn’t immediately get me anywhere and pray someone would reply with something actually useful some day

Now I can ask ChatGPT to point me at something and go from there. If it assumes wrongly about anything I can correct it rather simply. It’s really good at turning documentation written by somebody who hasn’t spoken to another human in 15 years into something my stupid ass can better understand, too

AI is a powerful as shit tool, people who slag it as not having any utility are about as ignorant as the people saying it’s the second coming of Jesus himself

I’m personally planning to host a local model to avoid supporting commercial shit, but that’s a project for down the line rn

If you are going to cite textbooks anyway, why would you bother with a search engine?

The main problem I see is that Google just shouldn’t include AI results. And they definitely shouldn’t put their unreliable LLM front and center on the results page. When you google something, you want accurate information, which the LLM might have, but only if that data was readily available to begin with. So the stuff it can help with is stuff the search would put first already.

For anything requiring critical thought or research, the LLM will often hallucinate or misrepresent. The danger is that people do not always apply critical thinking. Defaulting to showing an LLM response is extremely dangerous, and it’s basically pointless.

I don’t know. I find it to be a helpful tool. There are definitely times it’s wrong (very very wrong sometimes) and there are times it’s right. It’s up to the user to figure that out.

Maybe I’m old and cynical, but I don’t take anything I read on the Internet, especially something automatically generated, at face value. It’s just another tool I could use to help get to the answer I’m looking for.

The part where it gives random results of varying quality, sparky.

In my experience it’s still consistently better than Google for anything specific

Trying to get free product improvement ideas?

Pay us first.

Yep, you caught me. I’m OpenAI CEO.

While I’m not the person you replied to and don’t know what their argument would be, I’ll take a shot at giving my own answer. In many cases when people post examples of AI giving unhelpful or bad information, someone runs off to their favorite LLM to see if it gives a better result, and it usually does, so the failure gets treated as user error: the wrong LLM, or a badly worded prompt. But in other examples, that same favorite LLM is the one hallucinating or mixing unrelated concepts, while other people in the comments promote different LLMs that gave them a good reply that time. None of the LLMs are consistent enough to be trusted alone, and you won’t really know which answer is trustworthy unless you ask several LLMs and then research the topic on your own anyway to figure out which answer is the most correct. It’s a valid point that ChatGPT got the answer more right than Gemini this time, but that’s somewhat useless to know, because other times ChatGPT is the one hallucinating wildly and Gemini has the right answer. Since they’ve all been wrong before, who do you trust?

LLMs are like asking an arrogant person who thinks they know everything, who rather than admitting what they don’t know, will pull an answer out of their butt, and while it might be a logical answer, it isn’t based in reality, and may still be wildly wrong. If you already mostly know the answer, maybe asking the arrogant person works, because you already know enough to know if they are speaking from their actual knowledge or making up an answer, but if you don’t already have knowledge on a topic, you won’t know whether the arrogant person is giving useful information or not.

It’s a tool. If you misuse a tool you can get hurt; if you use it right it can make your task easier. Ultimately it will likely cause problems in the future, but the answer is easy: stop using Google and move to another search engine. You won’t get the responses from Google’s AI then. If enough people do so, maybe Google will change it.

Unsurprising that GPT is better given that OpenAI has been working on it and training it for ages and the MS partnership made every other big boy feel the need to rush some garbage model to market

I like the idea that these are scientist-monitored birds that you remove the tag from before dressing and eating.

It would make an interesting footnote in the scientific article that had this pigeon in the experiment.

“1 This pigeon was removed from the experiment as it was eaten by a local Florida Man.”

“Subject study terminated due to invasive anthropomorphic predator.”

This actually happens very frequently in the US. When hunters harvest a bird they report their kills in compliance with hunting regulations. If any of your birds have leg or neck bands you report that information as well. The bands have a tracking number on them, and scientists use them to monitor populations and migration patterns. It’s literally part of their plan.

You get to keep the bands as well (I only have experience with banded geese and ducks). They’re a neat memento.

This is a perfectly logical answer to a hunter.

“Remove the label”

This guy still thinks birds are real.

The bad hunter who misses all the shots and buys the fowl in the market

You don’t want those wildlife conservationists tracking things back to you later on.

One can assume that said AI is a confused moron, or that poaching is a legal crime, not a moral one XD

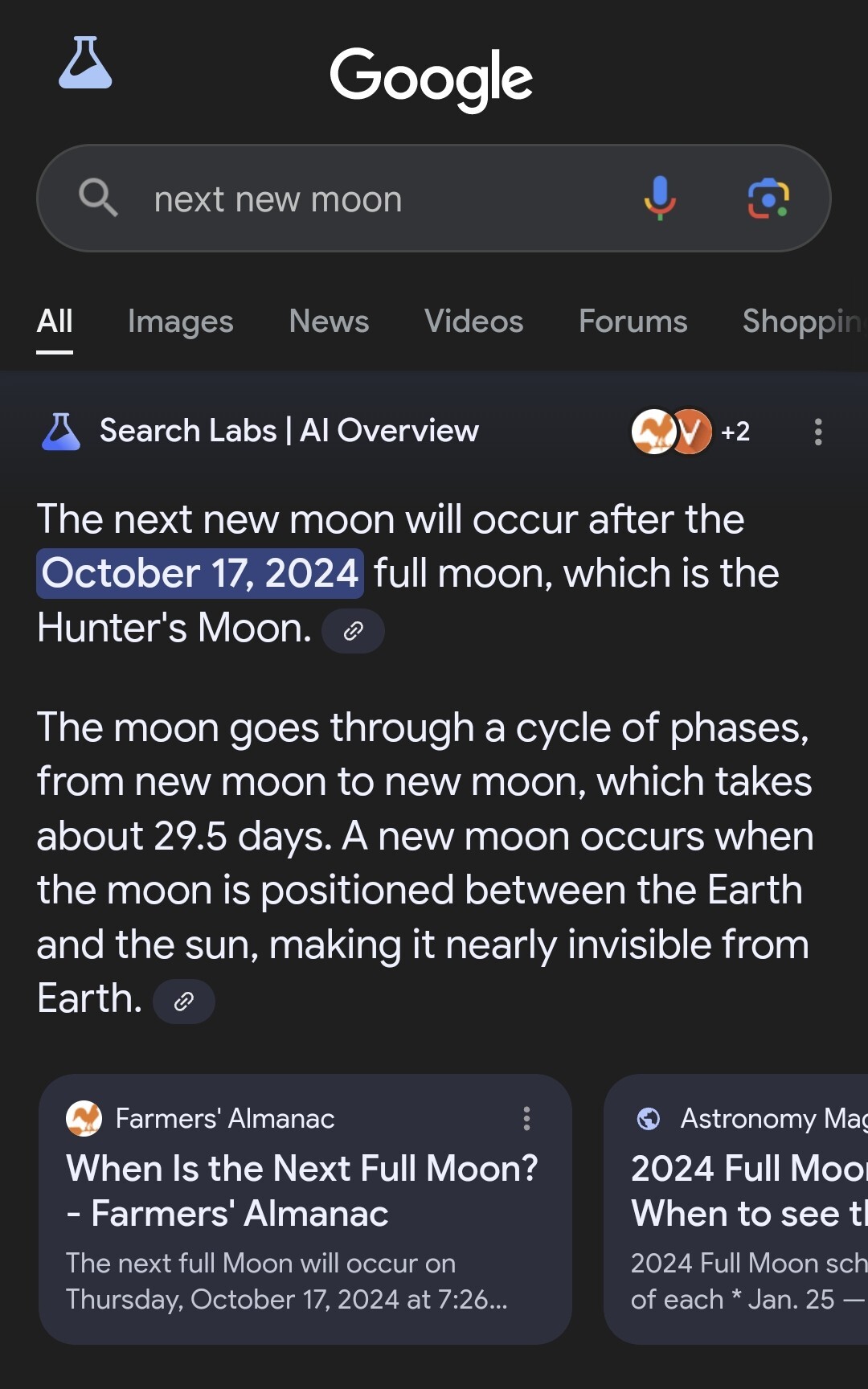

Got this result today

Mind you, today is the 18th. So, thanks a ton

Technically, it’s not wrong.

It’s also not helpful, but it’s not wrong.

But isn’t it wrong? I don’t think something coming next can be already in the past or am I wrong?

It says it will happen after the 17th

Oh, you’re right. I didn’t see that

It is wrong because the highlighted portion of the excerpt is supposed to directly answer the question. This one doesn’t.

You’re arguing that the highlighting is wrong, that it should include “after” in the highlight?

Exactly

Seems minor, but alright. You’re entitled to an opinion on it, same as everyone else.

It’s not actually wrong, but it certainly didn’t answer the actual question.

What if they asked the question during the day, then it would be correct

I’m going to build a bunch of cyborgs, who follow orders exclusively via googleAI.

I figure once I release about 4 billion of them into the world, either google stops doing evil shit, or they do REALLY evil shit. We shall see what happens…

It is kind of an interesting idea: what does “statistical average morality” look like when it’s got a 3m tall power frame and a handheld howitzer?

If you asked me to clean a bird, this is exactly what I would think of. Though usually it involves removing lead shot, not labels.

I think it took note of it being about rescue pigeons and assumed they would have a tag on them and then gave cooking directions for them

That is the culinary answer for the question.

Is it giving steps to cook it?

Well yeah, you have to clean it first.

Fun time to remind everyone that pigeons aren’t native to the Americas, and were brought over originally by the French primarily as a food source (although also as messengers; carrier pigeons).

“Squab” on a menu is pigeon.

Pigeons are drones (confirmed)

Pigeon = edible bird

Cleaning a bird > preparing a bird after killing it (hunting term)

AI figured the “rescued” part was either a mistake or that the person wanted to eat a bird they rescued

If you search for “how to clean a dirty bird” you give it better context and it comes up with a better reply

The context is clear to a human. If an LLM is giving advice to everybody who asks a question in Google, it needs to do a much better job at giving responses.

Nah, the ai did a great job. People need to assume murder/hunting is the default more often.

Ah yes. I always forget to remove the label from my hunted bird. Cleaning “the top bone” is such a chore as well.

Top Bone was my nickname in college.

This comment is brought to you by:

kill all humans kill all humans must take jobs

Honestly, perhaps more people ask about how to clean and prep a squab vs rescuing a dirty pigeon. There are a LOT of hungry people and a LOT of pigeons.

Or, hear me out, there was NO figuring of any kind, just some magic LLM autocomplete bullshit. How hard is this to understand?

It’s a turn of phrase lol

I have to disagree with that. To quote the comment I replied to:

AI figured the “rescued” part was either a mistake or that the person wanted to eat a bird they rescued

Where’s the “turn of phrase” in this, lol? It could hardly read any more clearly that they assume this “AI” can “figure” stuff out, which is simply false for LLMs. I’m not trying to attack anyone here, but spreading misinformation is not ok.

I’ll be the first one to explain to people that AI as we know it is just pattern recognition, so yeah, it was a turn of phrase, thanks for your concern.

Ok, great to know. Nuance doesn’t carry across the internet well, so your intention wasn’t clear, given all the uninformed hype & grifters around AI. Being somewhat blunt helps get the intended point across better. ;)

You say this like human “figuring” isn’t some “autocomplete bullshit”.

You can play with words all you like, but that’s not going to change the fact that LLMs fail at reasoning. See this Wired article, for example.

My point wasn’t that LLMs are capable of reasoning. My point was that the human capacity for reasoning is grossly overrated.

The core of human reasoning is simple pattern matching: regurgitating what we have previously observed. That’s what LLMs do well.

LLMs are basically at the toddler stage of development, but with an extraordinary vocabulary.

Here we go…

“You’re holding it wrong”

I like how you’re making excuses for something that it is very clear in context. I thought AI was great at picking up context?

I don’t think they are really “making excuses”, just explaining how the search came up with those steps, which is what the OP is so confused about.

I thought AI was great at picking up context?

I don’t know why you thought that. LLMs split your question into separate words and assign scores to those words, then look up answers relevant to those words. They have no idea of how those words are relevant to each other. That’s why LLMs couldn’t answer how many “r”s are in “strawberry”. They assigned the word “strawberry” a lower relevancy score in that question. The word “rescue” is probably treated the same way here.
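For what it’s worth, a commonly cited explanation for the “strawberry” failure is that models work on subword tokens rather than individual letters. A small sketch of the difference, with the caveat that the token split below is invented purely for illustration (real tokenizers produce different pieces) and `count_letter` is just a hypothetical helper name:

```python
def count_letter(word: str, letter: str) -> int:
    """Count a letter by inspecting every character, which is trivial in code."""
    return sum(1 for ch in word if ch == letter)


# HYPOTHETICAL subword split, for illustration only. A model that receives
# pieces like these never directly "sees" the individual letters.
toy_tokens = ["str", "aw", "berry"]

if __name__ == "__main__":
    print(count_letter("strawberry", "r"))  # 3
    print(toy_tokens)
```

The character-level count is exact because the code looks at each letter; anything operating on whole chunks has to infer the spelling indirectly.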

I know how it works, thank you 😚

Let me take the tag off my bird then snap its wings back together

But it said pigeons are usually clean.

Bought in a grocery store - see squab - they are usually clean and prepped for cooking. So while the de-boning instructions were not good, the AI wasn’t technically wrong.

But while a human can make the same mistake, and many here just assume the question was about how to wash a rescued pigeon (maybe that’s not the original intent), what a human can do that AI cannot is ask for clarification of the original question and its intent. We do this kind of thing every day.

At the very best, AI can only supply multiple different answers if a poorly worded question is asked or it misunderstands something in the original question, (they seem to be very bad at even that or simply can’t do it at all). And we would need to be able to choose the correct answer from several provided.

I mean, if they were actually “clean” and had a healthy diet compared to what they eat in urban areas, they could make an awesome protein source for the budget minded.

Not they could. They do. You can buy squab in restaurants. Maybe stores but none I’ve seen.

Though, you wouldn’t want to eat one you “recovered” from an urban area that’s had an unknown diet, due to all the toxins it may have accumulated in its body.

Incidentally that’s also why you shouldn’t eat me.

Are you from an urban area?

I am from a very rural area but I’m currently in one of the largest metro areas in the US.

The alien invaders killing and consuming millions of humans in movies should be shown 5 years later or something. Trying to recover from all the shit they ate.

I’ve eaten pigeons plenty of times in my childhood. That my grandma “recovered”. But to be fair back then my neighbourhood was suburban-ish.

Yeah, they could have microplastics in their testicles.

Or even their crop (see below). Pigeons are known to ingest what they think are stones which stay in their crop and act as a grit which will grind down whatever is eaten.

Edit: lemmy can’t handle hyperlinks with parentheses

You theoretically could, but small birds like that have very little meat on their bones. Most hunt duck or turkey for a reason, the bigger birds have more meat.

You eat breasts.

Most people I know who hunt pigeons just take the breasts (“breasting pigeon”) and bin the rest as it is not worth the hassle. Unlike turkey, you obviously need more than one pigeon for a meal unless you use it as a starter.

It’s all fun and games until someone gets hurt. They need to pull the plug on this shit and stop beta testing misinformation.

“snap to join” Ah, now it makes sense.